Team-Level vs Individual Metrics: Where AI Crosses the Line

AI didn’t create the measurement problem. It exposed where leadership draws the line.

I’ve had the privilege of working with truly exceptional engineering teams during my career.

Some of them were simply average or “good enough” teams.

But some of them really stood out. Those were the teams that built category-defining products, such as Google and, later, Flickr. What they had in common is that they shipped under pressure and operated with intensity and pride.

In fact, it was at one of those firms that I met my co-founder, Dave.

What I can tell you is that those teams all had one thing in common: they had nothing to hide, neither from leadership nor from each other.

That experience has shaped how I think about metrics, especially now that AI makes it possible to measure almost everything.

The debate about team-level vs individual metrics is not theoretical to me. I’ve seen both done well. I’ve seen both abused. And I’ve seen how quickly measurement systems can distort culture when leaders lose clarity about what they’re actually trying to achieve.

Great Teams Don’t Fear Visibility

In high-performing environments, transparency is not threatening. It’s liberating.

When everyone knows:

what good looks like,

where complexity lives,

who is carrying what,

and how delivery is evolving over time,

conversations become cleaner and there is no room for narrative manipulation.

The strongest engineers I’ve worked with never asked to be protected from data. They asked for clarity. They wanted to understand their impact relative to the system they operated in.

They didn’t fear individual visibility because they trusted that it would be contextualized within team reality.

That’s the difference.

Where Teams Hide and Why

The resistance to individual metrics often comes from a legitimate place. Poorly designed measurement systems can absolutely become toxic. That’s a discussion I have with Dave regularly, and it is what pushed us to build Pensero.

If you reduce engineers to commit counts or ticket closures, you will damage collaboration.

If you rank people mechanically, you will create gaming.

But avoiding individual visibility entirely creates a different failure mode.

When everything is averaged at the team level:

Disproportionate contributors disappear into the mean.

Quiet underperformance becomes socially protected.

Calibration becomes subjective.

Leadership decisions rely on interpretation instead of evidence.
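To make the averaging point concrete, here is a minimal sketch in Python. The numbers are invented purely for illustration, and the single "contribution" value per engineer is a hypothetical stand-in, not a proposal for how contribution should be measured. It only shows the arithmetic: two teams can report the same average while the distribution behind it is completely different.

```python
from statistics import mean, median

# Hypothetical numbers for illustration only: an abstract "contribution"
# value per engineer on two invented teams. This is not real data.
team_a = {"eng_1": 10, "eng_2": 10, "eng_3": 10, "eng_4": 10}
team_b = {"eng_1": 31, "eng_2": 5, "eng_3": 3, "eng_4": 1}


def summarize(team):
    """Return the team average plus two views of the distribution behind it."""
    values = list(team.values())
    total = sum(values)
    return {
        "mean": mean(values),              # what a team-level dashboard shows
        "median": median(values),          # the "typical" member
        "top_share": max(values) / total,  # share carried by the top contributor
    }


summary_a = summarize(team_a)
summary_b = summarize(team_b)

print("Team A:", summary_a)
print("Team B:", summary_b)
```

Both teams show the same mean, but in the second team one person carries most of the total. A team-level average alone cannot distinguish the two situations, which is why the distribution needs to be read alongside it and in context.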

I’ve seen executive teams rely heavily on perception-based inputs, sentiment surveys, or operational hygiene metrics. These tools are comfortable. They produce digestible dashboards. They allow a story to be told.

But perception is not performance.

And curated narratives are not accountability.

AI Makes the Line Clearer and Riskier

AI has introduced a new dimension.

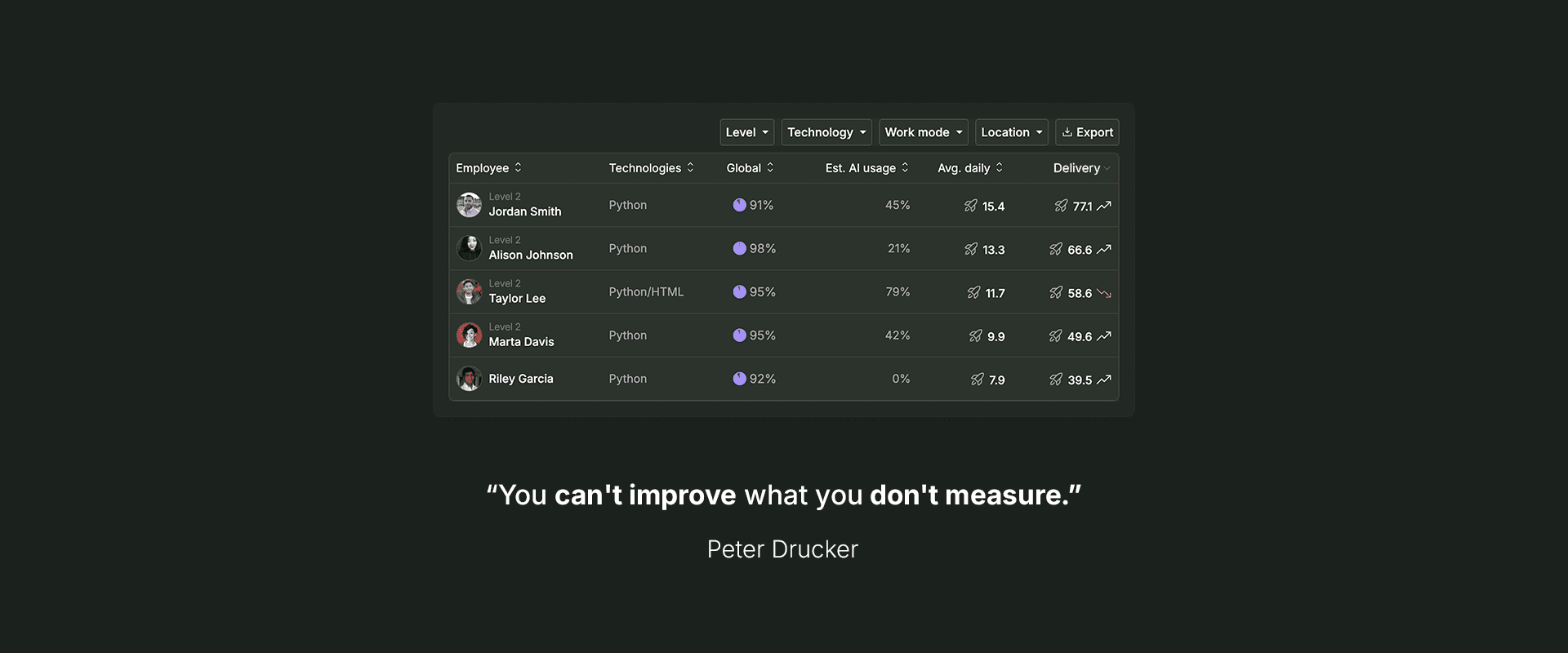

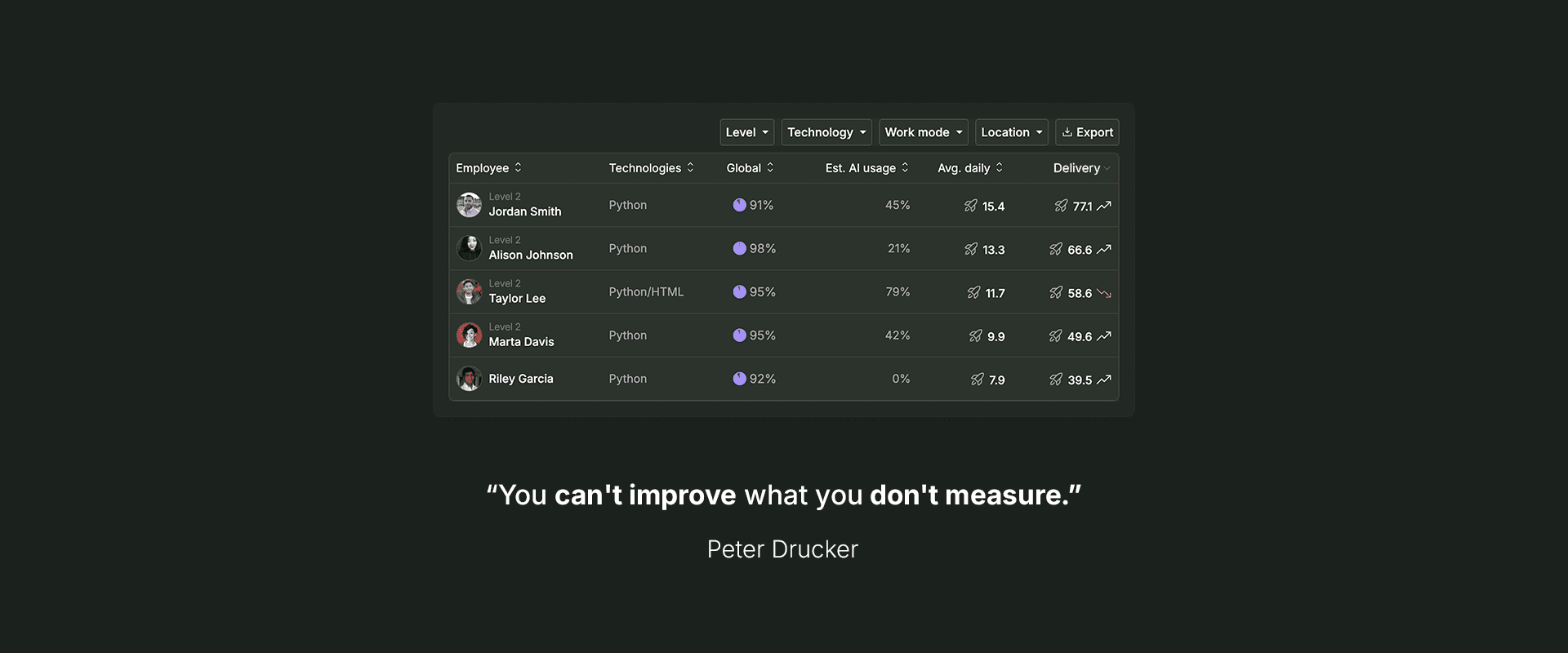

On one hand, it allows us to analyze real delivery artifacts directly: code evolution, refactoring depth, review patterns, collaboration networks, flow stability. Signals that previously required manual interpretation can now be observed continuously.

On the other hand, AI also makes it easy to generate opaque scores, automated rankings, and synthetic performance labels. This is where the line is crossed.

Specifically, AI crosses the line when it becomes:

A surveillance mechanism instead of an analytical lens.

An automated judge instead of a contextual intelligence layer.

A black box score instead of an auditable system of record.

In my experience, the healthiest teams don’t need protection from transparency. They need protection from distortion, because the main goal is to understand contribution within its system context.

Team-Level vs Individual Is the Wrong Framing

The real question is not:

“Should we measure teams or individuals?”

It is:

“Are we willing to measure reality?”

Engineering performance is produced by systems.

Systems are composed of individuals.

If you only measure the system, you cannot calibrate fairly.

If you only measure individuals, you ignore systemic constraints.

Great teams understand this intuitively.

They know who carries architectural risk, who unblocks others, who stabilizes production and where complexity accumulates.

The difference is whether leadership and the team are willing to look at the same truth without filters.

Transparency Is a Leadership Choice

Some executives prefer abstraction: They rely on sentiment, emphasize operational metrics and curate narratives upward.

I have to admit that it feels safer: it reduces friction and avoids uncomfortable calibration conversations.

But I am the kind of leader who chooses transparency to see:

how AI-assisted development is affecting quality,

where delivery flow degrades,

how contribution is distributed,

whether alignment exists in reality, not only in storytelling.

That choice defines culture more than any value statement.

The best engineering organizations I’ve seen were not obsessed with surveillance. They were obsessed with excellence. And as I have said before, excellence requires measurement as shared truth.

AI should serve that purpose too.

If your team has nothing to hide, transparency strengthens trust.

If transparency feels threatening, the problem is rarely the metric.

It’s what the metric might reveal.
